The Constraint Behind AI-Augmented Cyberattacks and Human-Machine Collaboration

Recent advances in artificial intelligence have accelerated the evolution of cyber threats, making attacks faster and more sophisticated. The shift matters now because traditional security measures struggle to keep pace with AI-enhanced tactics.

How AI Amplifies Cyberattack Capabilities

Artificial intelligence has not replaced hackers; it has amplified their capabilities. By automating repetitive tasks and improving precision, AI lets attackers scale their operations and slip past conventional security layers that were once effective.

Despite AI’s speed and automation, human hackers remain essential in directing these operations. They provide the strategic judgment necessary to select targets, adjust tactics, and refine AI tools. This collaboration between human insight and machine efficiency creates a formidable threat that challenges defenders.

The timing of this development is critical as cyberattacks become more frequent and complex, demanding that security teams adapt quickly to the new reality of human-machine collaboration in cybercrime.

AI-Driven Social Engineering and Malware Evolution

One of the most striking impacts of AI is its ability to mimic human behavior online, which has transformed social engineering attacks. Attackers use AI to generate convincing digital personas, complete with synthetic resumes, and exploit current trends such as remote work to bypass identity verification processes. This degree of personalization turns social engineering from a blunt instrument into a precise tool, increasing the success rate of breaches.

Malware has also evolved through AI’s influence. Attackers now deploy polymorphic malware that changes its code dynamically to avoid detection by signature-based defenses. However, AI does not operate in isolation; human operators continuously adapt and refine these malicious programs to navigate unpredictable digital environments. This interplay highlights the necessity of human expertise alongside AI-driven malware adaptation.
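To see why signature-based defenses struggle here, consider a minimal sketch in which a static signature is modeled as a SHA-256 digest. The byte strings and action traces below are harmless, made-up placeholders, and `behavior_profile` is a hypothetical stand-in for whatever behavioral features a real detection product would extract. It is an illustration of the idea, not a description of any specific tool.

```python
import hashlib


def sha256_signature(payload: bytes) -> str:
    """Return the hex SHA-256 digest, standing in for a static signature."""
    return hashlib.sha256(payload).hexdigest()


def behavior_profile(observed_actions: list[str]) -> frozenset[str]:
    """Collapse an ordered action trace into an order-independent set."""
    return frozenset(observed_actions)


# Two harmless byte strings that differ by one padding byte, standing in
# for two variants of the same program (illustrative data only).
variant_a = b"example-program-body"
variant_b = b"example-program-body" + b"\x00"

# Hypothetical action traces: same behaviors, observed in a different order.
trace_a = ["open_file", "read_config", "connect_network"]
trace_b = ["read_config", "open_file", "connect_network"]

signatures_match = sha256_signature(variant_a) == sha256_signature(variant_b)
behaviors_match = behavior_profile(trace_a) == behavior_profile(trace_b)

print(f"static signatures match: {signatures_match}")
print(f"behavior profiles match: {behaviors_match}")
```

A one-byte change yields a different digest, so an exact-match signature no longer applies, while the behavior-level view of the two variants stays the same. Real behavioral detection is far richer than a set comparison, but the contrast is the point.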

Challenges in Attribution and the Spread of Hybrid Threats

The distinction between criminal syndicates and nation-states is increasingly blurred as AI tools and tactics become widely shared. This fusion complicates attribution efforts, making it difficult to identify the true source of attacks. Critical infrastructure such as hospitals, transit systems, and government agencies often become targets in this tangled web of shared capabilities.

At the same time, AI-generated fake content floods digital channels, doubling in volume within a year. This surge in digital impersonation and disinformation fuels geopolitical tensions and undermines public trust. The hybrid threat landscape demands new approaches to both attribution and response strategies.

These developments underscore the urgency of addressing cybersecurity governance to manage the complex motives and methods that AI-augmented attackers employ.

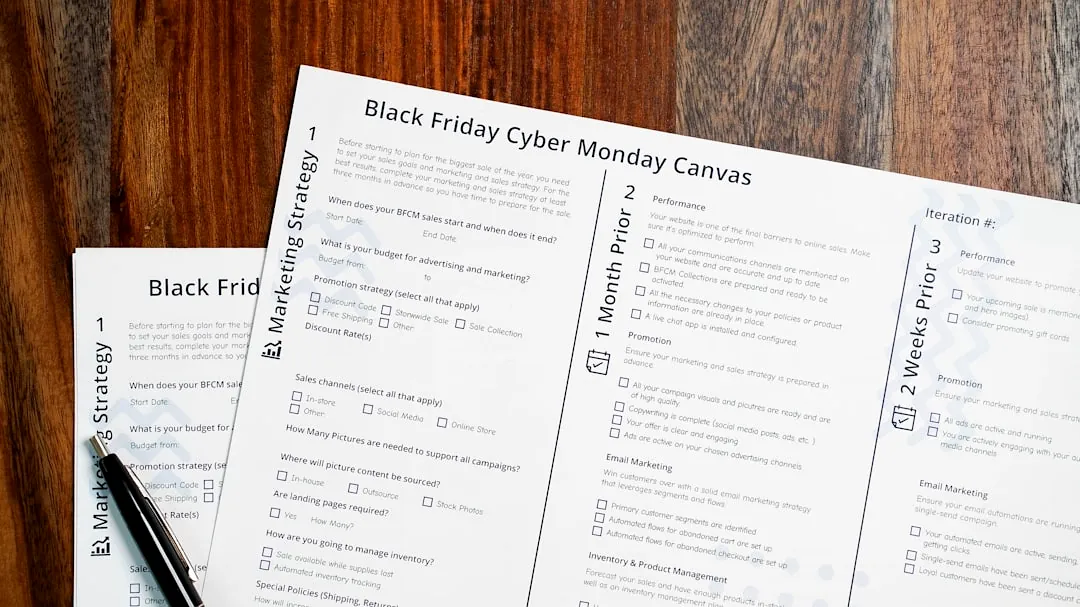

Below is a comparison of key attributes distinguishing traditional cyber threats from AI-augmented attacks.

Comparison of Traditional vs. AI-Augmented Cyberattacks

| Aspect | Traditional Cyberattacks | AI-Augmented Cyberattacks |
|---|---|---|
| Attack Speed | Manual and slower | Automated and rapid |
| Social Engineering | Generic, less personalized | Highly personalized with AI-driven personas |
| Malware | Static signatures | Polymorphic and adaptive |
| Attribution | More straightforward | Obfuscated by shared AI tools |
| Defensive Response | Rule-based detection | Requires AI-powered analysis and human oversight |

Defensive Trade-offs in AI-Powered Cybersecurity

Defenders now wield AI tools that analyze malware, triage alerts, and uncover hidden attack patterns faster than human teams alone. These automated threat detection systems enhance response times and improve accuracy in identifying complex attacks. However, these AI defenses are not infallible and require constant tuning to maintain effectiveness.

Overreliance on AI without expert oversight introduces risks such as false alarms and vulnerabilities to adversarial AI attacks, including model poisoning or jailbreaks. This trade-off between efficiency and control demands a balanced approach that integrates AI capabilities with human judgment.
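One way to picture this balance is a triage rule that lets the model handle only the clear-cut cases and sends everything ambiguous to an analyst. The sketch below is a minimal illustration: the `Alert` type, the score range, the threshold values, and the route names are all assumptions made for the example, not settings from any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    alert_id: str
    model_score: float  # hypothetical model confidence in [0.0, 1.0]


def route_alert(alert: Alert, low: float = 0.2, high: float = 0.9) -> str:
    """Route an alert by model score; thresholds are illustrative defaults."""
    if not 0.0 <= alert.model_score <= 1.0:
        raise ValueError(f"score out of range: {alert.model_score}")
    if alert.model_score >= high:
        return "escalate_to_analyst"  # likely malicious: a human responds
    if alert.model_score <= low:
        return "auto_close"  # likely benign: closed, but still logged
    return "human_review"  # ambiguous: the model does not decide alone


if __name__ == "__main__":
    for alert in (Alert("a-1", 0.95), Alert("a-2", 0.05), Alert("a-3", 0.50)):
        print(alert.alert_id, route_alert(alert))
```

Widening the band between `low` and `high` shifts work toward analysts and reduces reliance on the model; narrowing it does the opposite. Where to set the band depends on how much the model's scores can be trusted.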

Emerging Vulnerabilities Within AI Systems

AI systems themselves introduce new security challenges. Attackers exploit AI’s flexible context interpretation by feeding adversarial inputs that cause unintended data leaks or malicious outputs. Cloud platforms hosting AI tools face supply chain attacks where corrupted components inject false data, undermining security measures.

Without rigorous cybersecurity governance, such as strict identity and access controls, AI agents risk acting beyond their intended scope. An over-privileged agent behaves much like an insider threat, which extends the security problem beyond traditional perimeter boundaries and requires organizations to rethink their defense strategies.
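A deny-by-default scope check is one simple form such access controls can take. In the sketch below, the agent names, action names, and the `AGENT_SCOPES` table are hypothetical. A real deployment would typically enforce this in an identity and access management layer rather than in application code.

```python
# Hypothetical mapping from agent identity to the actions it may perform.
AGENT_SCOPES: dict[str, frozenset[str]] = {
    "triage-agent": frozenset({"read_alerts", "annotate_alert"}),
    "report-agent": frozenset({"read_alerts"}),
}


def is_action_allowed(agent_id: str, action: str) -> bool:
    """Deny by default: unknown agents and unlisted actions are refused."""
    return action in AGENT_SCOPES.get(agent_id, frozenset())


if __name__ == "__main__":
    print(is_action_allowed("triage-agent", "annotate_alert"))
    print(is_action_allowed("report-agent", "annotate_alert"))
    print(is_action_allowed("unknown-agent", "read_alerts"))
```

The design choice that matters is the default: an agent missing from the table gets an empty scope, so a newly added or misconfigured agent can do nothing until someone grants it permissions explicitly.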

Understanding these vulnerabilities is essential as AI becomes more deeply integrated into both offensive and defensive cyber operations.

Operational Realities and Future Implications

The transient nature of malicious infrastructure, such as phishing sites and command-and-control servers that often last less than two hours, forces defenders into a relentless game of cat and mouse. AI plays a role on both sides, accelerating attack deployment and defense response.
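Short-lived infrastructure means that indicators such as domains go stale quickly, so a blocklist needs some notion of expiry. The sketch below assumes a two-hour time-to-live, taken from the lifetime mentioned above. The function name, the data layout, and the example domains are illustrative assumptions only.

```python
from datetime import datetime, timedelta, timezone

# Assumed time-to-live, based on the "less than two hours" lifetime above.
INDICATOR_TTL = timedelta(hours=2)


def active_indicators(
    last_seen: dict[str, datetime],
    now: datetime,
    ttl: timedelta = INDICATOR_TTL,
) -> list[str]:
    """Return indicators seen within the TTL window, sorted by name."""
    return sorted(name for name, seen in last_seen.items() if now - seen <= ttl)


if __name__ == "__main__":
    now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
    sightings = {
        "phish.example": now - timedelta(minutes=30),  # still fresh
        "c2.example": now - timedelta(hours=3),  # past the TTL
    }
    print(active_indicators(sightings, now))
```

Expiring stale entries keeps the list small and current, at the cost of missing infrastructure that an attacker reuses later. A longer TTL trades the other way.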

Investing in cutting-edge AI security tools is vital, but it cannot replace fundamental cyber hygiene practices. Neglecting basic controls leaves exploitable gaps that attackers eagerly target. This persistent constraint highlights the importance of maintaining foundational security measures alongside advanced technologies.

Ultimately, the fusion of AI and human hackers creates a hybrid threat landscape that defies easy detection, attribution, or response. Protecting critical systems and managing geopolitical fallout require resilient strategies that blend automation with indispensable human insight.